As someone who is working with Docker Container images, you are here for one of the following reasons:

- You came across the new Docker Hub pull rate limit;

- You encountered the error message “You have reached your pull rate limit”.

Either way, you are now desperate to find a solution.

docker pull wordpress:latest

Error response from daemon: pull access denied for wordpress, repository does not exist or may require ‘docker login’:

denied: You have reached your pull rate limit. You may increase the limit by authenticating and upgrading: https://www.docker.com/increase-rate-limit

Look no further as we show you three different ways for overcoming the Docker Hub pull rate limit.

Docker Hub Rate Limit Consequences

To evaluate the solutions, let’s first understand how the rate limit works. The limits as of December 13, 2023 are the following:

- 100 pull requests per 6 hours for anonymous users on a free plan, enforced based on your IP address.

- 200 pull requests per 6 hours for authenticated users on a free plan.

- Up to 5000 pulls per day for paying customers.

At first glance, 100 or 200 pulls per 6 hours do not look too restrictive, until you find out how Docker Hub measures the rate limit.

Docker Hub counts every GET request sent to registry manifest URLs (/v2/*/manifests/*) against your quota.

In plain English, every docker pull command execution counts against your quota, regardless of whether the requested image is up to date or not.

For instance, if you execute docker pull alpine twice, you come two steps closer to exhausting your rate limit. Even if no image was transferred on the second execution, two pull requests referring to the same image tag count as two and not one.

Hitting the request limit is a piece of cake if you deploy your application stack to a cluster that’s behind a NAT or if you use Always as your pull policy.
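To make the arithmetic concrete, here is a rough sketch that estimates how many pulls a cluster behind a single public IP generates in one 6-hour window. The cluster size, image count, and restart count are hypothetical numbers chosen for illustration, and the estimate assumes one counted request per docker pull, as described above.

```shell
# Free-plan limit for anonymous pulls per 6-hour window (from the limits above).
ANONYMOUS_LIMIT=100

# Estimate pulls charged to one public IP in a window:
# nodes x images per node x number of times each image is pulled.
estimated_pulls() {
  echo $(( $1 * $2 * $3 ))
}

# Report whether an estimate stays within the anonymous limit.
quota_status() {
  if [ "$1" -le "$ANONYMOUS_LIMIT" ]; then
    echo "within limit"
  else
    echo "over limit by $(( $1 - ANONYMOUS_LIMIT ))"
  fi
}

# Hypothetical cluster: 5 nodes behind one NAT, 8 images per node,
# each image pulled 3 times (restarts with pull policy Always).
quota_status "$(estimated_pulls 5 8 3)"   # prints "over limit by 20"
```

Even this small setup would exceed the anonymous quota, because all nodes share one IP address from Docker Hub's point of view.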

If you want to dive into details and see what your current quota is, the Download Rate Limit section in the Docker Hub documentation is a good starting point.
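As a sketch of what that check can look like, the functions below follow the procedure from the Docker documentation: request an anonymous token for the ratelimitpreview/test repository, send a HEAD request for its manifest, and read the ratelimit-limit and ratelimit-remaining headers. The function names are made up for this example, curl and jq are assumed to be installed, and the endpoints may change over time.

```shell
# Extract the pull count from a header value such as "100;w=21600".
ratelimit_count() {
  printf '%s\n' "${1%%;*}"
}

# Extract the window length in seconds from the same header value.
ratelimit_window() {
  printf '%s\n' "${1##*w=}"
}

# Print the rate-limit headers for anonymous pulls from the current IP.
# A HEAD request is used so that the check itself should not count as a pull.
show_docker_hub_quota() {
  token=$(curl -fsS "https://auth.docker.io/token?service=registry.docker.io&scope=repository:ratelimitpreview/test:pull" | jq -r .token)
  curl -fsS --head -H "Authorization: Bearer $token" \
    "https://registry-1.docker.io/v2/ratelimitpreview/test/manifests/latest" |
    grep -i '^ratelimit-'
}
```

Calling show_docker_hub_quota prints the raw headers. A value like 100;w=21600 can then be split with ratelimit_count and ratelimit_window into 100 pulls per 21600 seconds, which is the 6-hour window.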

How to Overcome Docker Hub Pull Rate Limit

#1 Subscribe to Docker Hub

This will allow you to increase the pull limit for authenticated users and make it unlimited for anonymous ones. If you are an individual or a small team of 2-10 people who just need a space to store images, then paying $5 to $7/month per user is the simplest solution.

Admittedly, you probably didn’t come here for this recommendation. Still, keep in mind that Docker Hub is the de facto go-to place for open-source software binaries and is free for public images. The pricing for unlimited private repositories is fair as well.

#2 Mirror Images to Your Own Registry

Mirroring or copying images from Docker Hub to your own registry might seem like overkill at first glance. However, it has two major benefits for security and governance and is considered a best practice, especially for using containers in an enterprise context.

Security

- You get a better overview of image vulnerabilities and can scan images before deployment.

- You can enable policies that prevent vulnerable container images from being deployed.

Governance

- You get a clear overview of all container images, software versions, and licenses.

- You can be sure that third-party images can’t be modified after the fact.

Methods of Mirroring Images from One Registry to Another

Apart from mirroring images manually using the Docker CLI with pull and push commands, two solutions exist for automating the replication process.

- Harbor & Container Registry built-in image replication feature with policies and event rules.

- Skopeo to synchronize images between different container registries. Can run as a part of a continuous integration workflow.

In the case of Harbor, your images will be “backed-up” in a new registry, and you can pull them directly from there.
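The manual Docker CLI route and the Skopeo route can be sketched as follows. The registry host registry.example.com and the project name mirror are placeholders, not values from this article, and the function names are invented for the example.

```shell
# Hypothetical target registry and project; replace with your own.
MIRROR_REGISTRY="registry.example.com"
MIRROR_PROJECT="mirror"

# Compute the mirrored name for a Docker Hub image such as "wordpress:latest".
mirror_target() {
  printf '%s/%s/%s\n' "$MIRROR_REGISTRY" "$MIRROR_PROJECT" "$1"
}

# Variant 1: plain Docker CLI (pull, retag, push).
mirror_with_docker() {
  target=$(mirror_target "$1")
  docker pull "$1" &&
    docker tag "$1" "$target" &&
    docker push "$target"
}

# Variant 2: Skopeo copies from registry to registry
# without needing a local Docker daemon.
mirror_with_skopeo() {
  skopeo copy "docker://docker.io/$1" "docker://$(mirror_target "$1")"
}
```

Running mirror_with_skopeo wordpress:latest in a scheduled CI job would keep the copy fresh while spending only that job's pulls on Docker Hub. Both variants assume you are already logged in to the target registry.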

#3 Proxy to Docker Hub

The third option is pretty similar to option #2 but there are no replication rules needed. Yet you get the same benefits of security and governance. In this scenario, you create a so-called proxy cache project that will store your last used images automatically. They can be later pulled from the proxy cache without touching the Docker Hub limit.

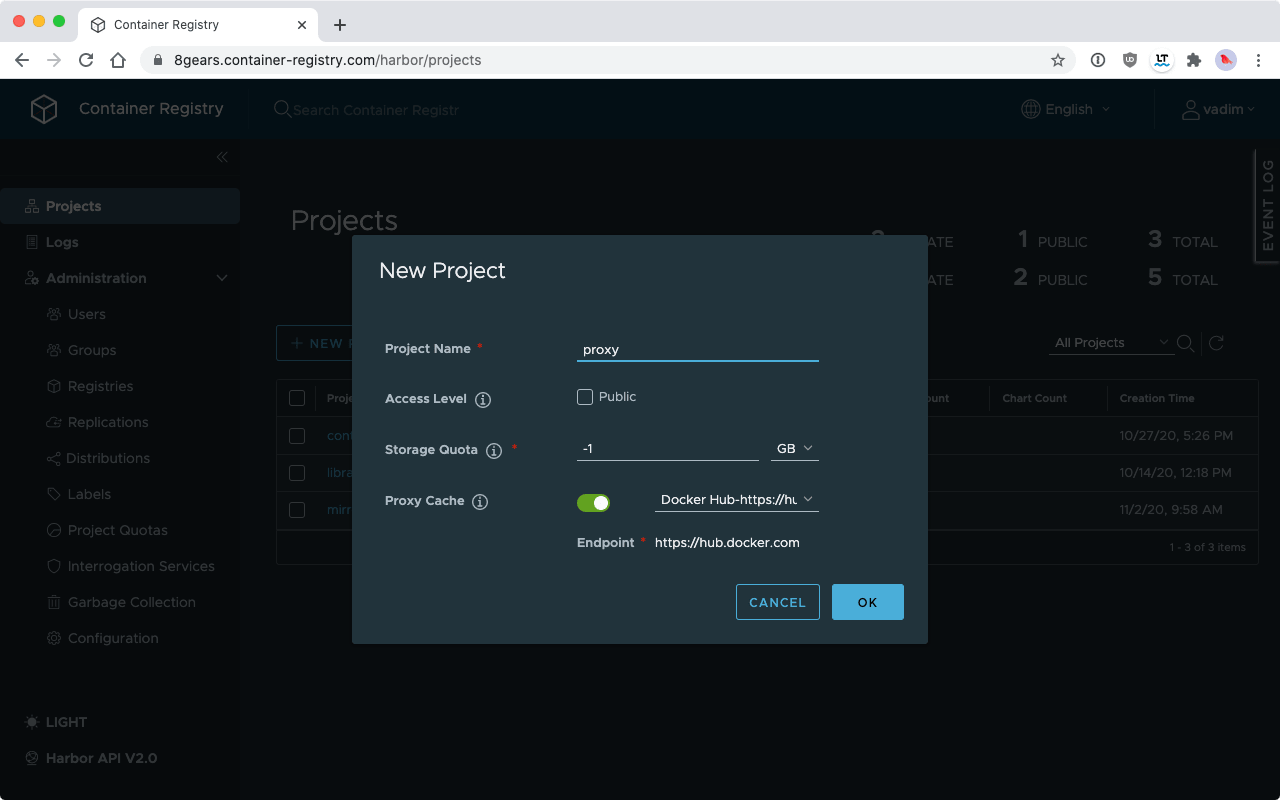

Proxy cache is a feature of our Dedicated Container Registry Service based on Harbor 2.1. It is easy to set up: as a system admin, simply enable Proxy Cache while adding a new project and enter the link to a registry to cache.

Creating a Proxy for Docker Hub

After the Proxy Cache is set up, you can test it by pulling an image via the cache. You need to add your registry name and project as a prefix. For example, if you used docker pull postgres:13 to pull from Docker Hub before, you should now use docker pull c8n.io/proxy/postgres:13 to pull images from the proxy.
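The prefixing rule is easy to wrap in a small helper so that scripts do not hard-code it in many places. This is a minimal sketch that uses the c8n.io/proxy prefix from the example above; the function name is an assumption made for illustration.

```shell
# Registry host and proxy project taken from the example above.
PROXY_PREFIX="c8n.io/proxy"

# Prefix a Docker Hub image reference with the proxy project.
# References that already carry the prefix are returned unchanged.
proxied_image() {
  case "$1" in
    "$PROXY_PREFIX"/*) printf '%s\n' "$1" ;;
    *)                 printf '%s\n' "$PROXY_PREFIX/$1" ;;
  esac
}

proxied_image postgres:13   # prints "c8n.io/proxy/postgres:13"
```

A pull through the cache then becomes docker pull "$(proxied_image postgres:13)". Harbor's proxy cache documentation also describes adding a library/ namespace for official images, so it is worth verifying which form your registry expects.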

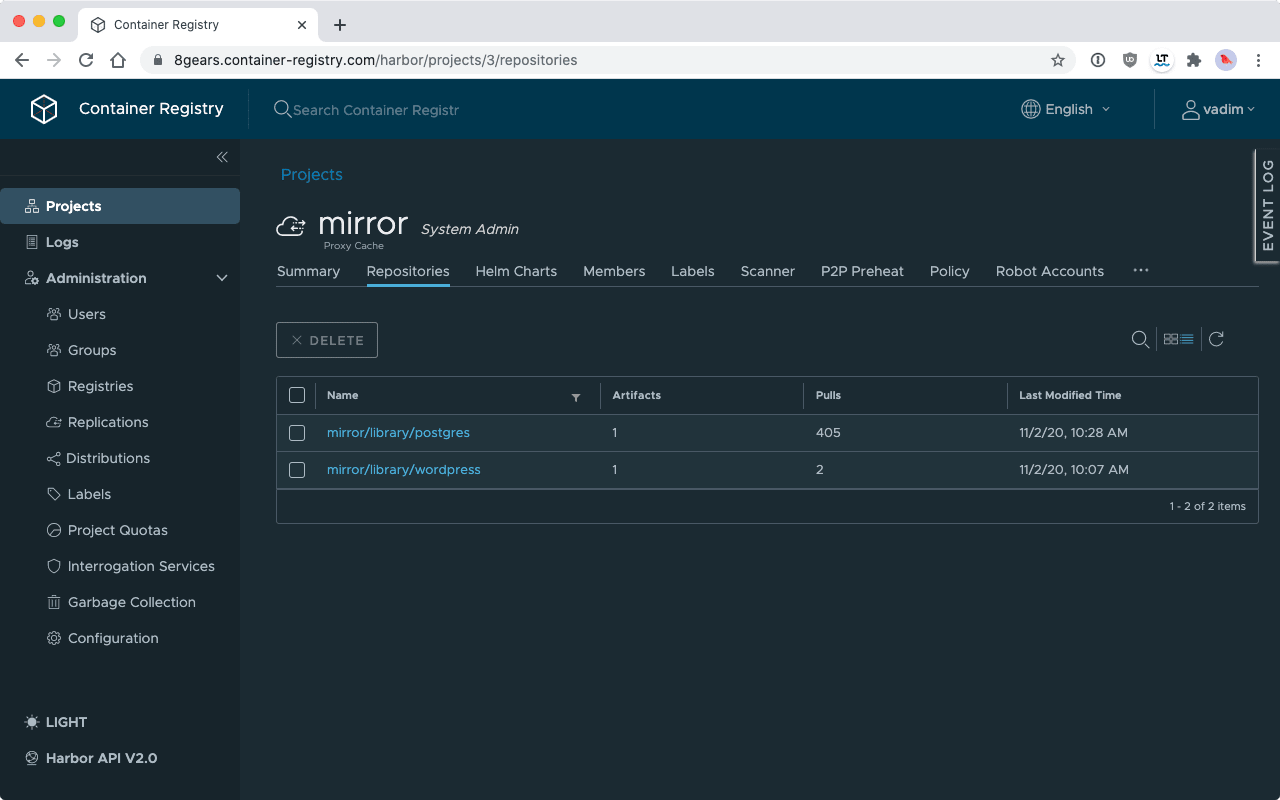

List of All Proxied Images

How to Make Sure That You Only Pull Images from Your Own Registry

For this setup to work as desired, you need to enforce that images are fetched from the private registry and never from Docker Hub directly. For Kubernetes, two solutions exist to simplify the workflow.

Portieris is a Kubernetes admission controller to enforce image security policies. You can create image security policies for each Kubernetes Namespace, or at the cluster level, and enforce different rules for different images.

Kubernetes Dynamic Admission Control allows you to define callbacks and rewrite image specs to point to your internal registry. There is no ready-made solution yet.
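Until such a policy is in place in the cluster, a simple pre-deployment check can catch stray Docker Hub references. The sketch below is not an admission controller. It is a plain lint that could run in CI, and it assumes simple manifests where each image reference sits on its own image: line. The c8n.io/ prefix is taken from the earlier example, and the function name is hypothetical.

```shell
# Only images whose reference starts with this prefix are accepted.
ALLOWED_PREFIX="c8n.io/"

# Read a Kubernetes manifest on stdin and print every image reference
# that does not start with the allowed prefix.
# Exit status is 1 if at least one such reference was found, 0 otherwise.
foreign_images() {
  awk -v allowed="$ALLOWED_PREFIX" -v sq="'" '
    {
      ref = ""
      if ($1 == "image:") { ref = $2 }
      else if ($1 == "-" && $2 == "image:") { ref = $3 }
      if (ref == "") { next }
      gsub("\"", "", ref)   # strip optional double quotes
      gsub(sq, "", ref)     # strip optional single quotes
      if (index(ref, allowed) != 1) { print ref; bad = 1 }
    }
    END { exit (bad + 0) }
  '
}
```

A CI step could call foreign_images < deployment.yaml and fail the build on a non-zero exit status. This complements cluster-side enforcement and does not replace it.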

Working with Registry Image Secrets

Having unauthenticated access to the container registry is convenient: no passwords, no users, it just works. With a private registry you need pull secrets instead, and the registry credential operator tries to auto-inject those secrets into the right places.

- The registry-creds operator will propagate a single ImagePullSecret to all Namespaces within your cluster, so we can pull images with authentication.
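To show what the operator saves you from doing by hand, the following sketch prints the kubectl commands that would create the same pull secret in several namespaces. The secret name, registry host, and the REGISTRY_USER and REGISTRY_PASSWORD variables are placeholders invented for this example. Nothing is executed; the commands are only printed.

```shell
# Hypothetical secret name and registry host; replace with your own.
SECRET_NAME="registry-pull-secret"
REGISTRY_SERVER="registry.example.com"

# Print one kubectl command per namespace passed as an argument.
# Credentials are referenced as environment variables, so their values
# never appear in the generated text.
pull_secret_commands() {
  for ns in "$@"; do
    printf 'kubectl create secret docker-registry %s --namespace=%s --docker-server=%s --docker-username="$REGISTRY_USER" --docker-password="$REGISTRY_PASSWORD"\n' \
      "$SECRET_NAME" "$ns" "$REGISTRY_SERVER"
  done
}

pull_secret_commands default staging
```

Each Pod or ServiceAccount would still need to reference the secret under imagePullSecrets, and the list has to be repeated whenever a namespace is added, which is the manual work the operator is meant to remove.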

Closing Words

As you saw, with the right tools it is possible to overcome the Docker Hub pull limit.

While using a proxy with Docker Hub is still uncommon, the method stands a good chance of becoming the norm. Container users have been putting all their eggs (or containers) into the same basket for too long by fetching all their base images from only one place.

Now, we see the fragility of the system. Developers must regain control of all images they use and store them in their own registry. A proxy project is a neat solution for this.

Container Management Solution for Teams

Our Harbor-based Container Management Solution can proxy and replicate container images from Docker Hub and other registries.

Discover our offer

Related Sources

- Download rate limit / Official Docker documentation

- Image Pull Rate / Official Pricing Plans

- “Checking Your Current Docker Pull Rate Limits and Status” by Peter McKee, published on the official Docker blog